Yann LeCun, Meta’s chief AI scientist, believes current AI is far from sentience, according to CNBC. His view contrasts with that of Nvidia CEO Jensen Huang, who expects AI to rival human intelligence in under five years.

“I know Jensen,” LeCun recently remarked at an event celebrating the 10th anniversary of Facebook’s Fundamental AI Research team, noting that the Nvidia CEO stands to benefit significantly from the current surge in AI interest. “There is an AI war, and he’s supplying the weapons.”

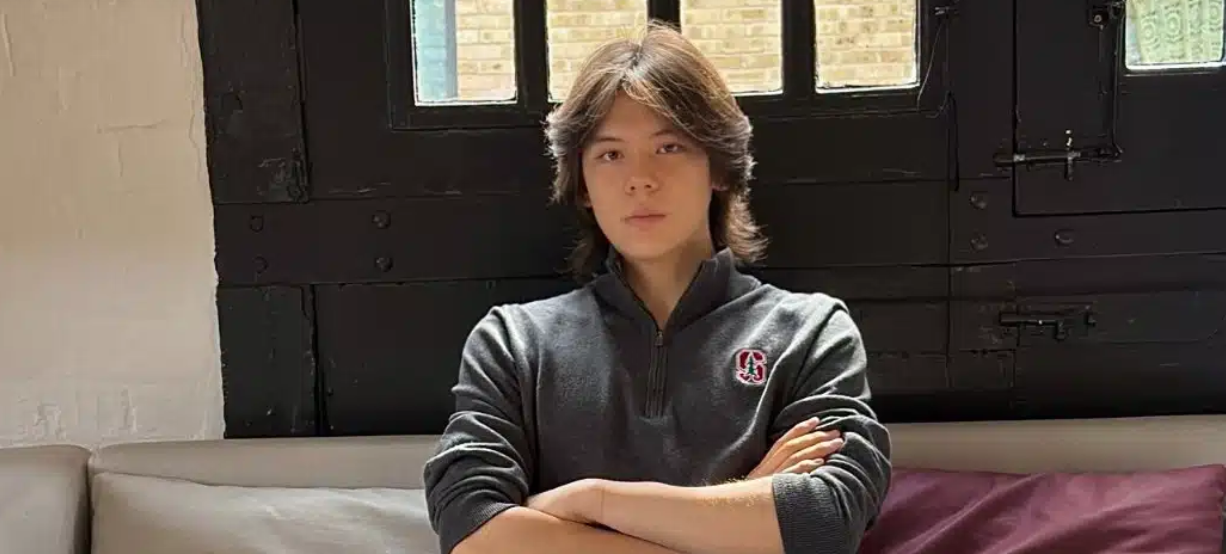

In addition to his duties at Meta, LeCun is a Silver Professor at New York University, specializing in Data Science, Computer Science, Neural Science, and Electrical Engineering. His affiliations include NYU’s Center for Data Science, the Courant Institute of Mathematical Sciences, the Center for Neural Science, and the Department of Electrical and Computer Engineering.

On the efforts of technologists, including those at OpenAI, to create artificial general intelligence (AGI) that matches human intelligence, LeCun said, “[If] you think AGI is in, the more GPUs you have to buy.” He also noted that as these research pursuits intensify, demand for Nvidia’s computer chips will continue to grow.

LeCun foresees “cat-level” or “dog-level” AI preceding human-level AI. He emphasizes that language models alone won’t achieve advanced AI, which is why Meta is exploring transformer models that handle diverse data such as audio, images, and video. This approach could unlock more complex AI capabilities.

Meta’s projects include augmented reality tennis training using Project Aria glasses, which requires AI models that integrate 3D visuals, text, and audio. Such multimodal AI systems are costly and complex, which could benefit Nvidia, a key player in AI hardware whose GPUs are widely used in AI training. Meta’s Llama AI software, for example, was trained on Nvidia’s A100 GPUs.

Regarding hardware, the tech industry might need more providers as AI advances. LeCun also voiced skepticism about quantum computing’s near-term impact, seeing its relevance to current AI development as possible but distant.

“Text is a very poor source of information. Train a system on the equivalent of 20,000 years of reading material, and they still don’t understand that if A is the same as B, then B is the same as A,” he said, adding that the volume of text used to train modern language models is so vast that it would take a human approximately 20,000 years to read it all. “There’s a lot of really basic things about the world that they just don’t get through this kind of training.”

LeCun stated that while GPU technology isn’t strictly necessary, it remains the gold standard in AI. He anticipates that future computer chips will evolve beyond traditional GPUs into specialized neural-network and deep-learning accelerators. He also expressed skepticism about the practicality of quantum computing, noting that classical computers can solve many problems more efficiently. LeCun finds quantum computing scientifically fascinating but is uncertain about its practical relevance and about the feasibility of building useful quantum computers.

Featured image credit: New York University