Insider Brief

- xAI revealed that it has begun training its large language models (LLMs) on the Memphis Supercluster.

- The supercluster is equipped with 100,000 liquid-cooled H100 graphics processing units (GPUs) from Nvidia.

- Local media sources are reporting that xAI has yet to secure a contract with the Tennessee Valley Authority (TVA) for electricity provision.

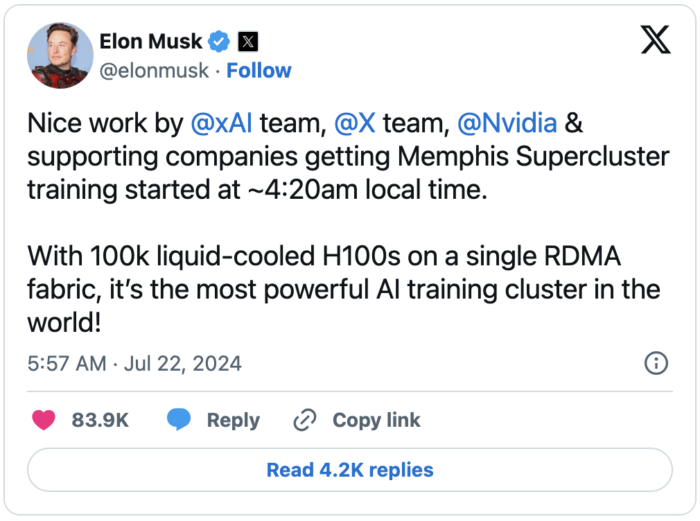

xAI has begun training its large language models (LLMs) on the Memphis Supercluster, which Elon Musk described on the social media platform X as the “most powerful AI training cluster in the world.”

The supercluster, located in southwest Memphis, represents the largest capital investment by a new-to-market company in the city’s history, according to local news outlet WREG.

The supercluster houses 100,000 liquid-cooled Nvidia H100 graphics processing units (GPUs), which were introduced last year, VentureBeat reports. These high-demand chips are essential for AI model providers, including xAI competitors such as OpenAI. The GPUs are also linked by a single Remote Direct Memory Access (RDMA) fabric, a technology that, according to Cisco, enables more efficient, lower-latency data transfer between compute nodes without burdening the central processing unit (CPU).
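
The article does not say how xAI spreads training across its GPUs. One widely used scheme, data parallelism, gives each GPU its own slice of every batch; after computing gradients locally, the GPUs average them with one another (an "all-reduce") before updating the model. That exchange happens at every training step, which is why fast node-to-node fabrics such as RDMA matter. The toy Python sketch below simulates the idea in a single process with a one-parameter model. The worker count, data and learning rate are illustrative assumptions, and `all_reduce_mean` is a hypothetical stand-in for a real collective operation, not any library's API.

```python
# Toy, single-process simulation of data-parallel training (ASSUMED setup,
# not xAI's actual method). Model: y ≈ w * x, loss: mean squared error.


def gradient(w, shard):
    """Gradient of mean squared error w.r.t. w over one worker's shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values):
    """Hypothetical stand-in for a collective all-reduce:
    every worker ends up holding the mean of all workers' values."""
    mean = sum(values) / len(values)
    return [mean] * len(values)


def data_parallel_step(w, shards, lr):
    # On real hardware each gradient would be computed on a separate GPU.
    local_grads = [gradient(w, shard) for shard in shards]
    synced = all_reduce_mean(local_grads)  # the step that crosses the network
    return w - lr * synced[0]              # every worker applies the same update


data = [(x / 10, 3.0 * x / 10) for x in range(1, 41)]  # 40 points on y = 3x
num_workers = 4
shards = [data[i::num_workers] for i in range(num_workers)]  # 4 equal shards

w = 0.0
for _ in range(100):
    w = data_parallel_step(w, shards, lr=0.05)
print(f"learned w = {w:.4f}")
```

Because the shards are equal in size, the averaged gradient equals the gradient over the full batch, so the four simulated workers learn the same weight (close to 3) that a single machine would.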

Despite this technological leap, xAI has yet to secure a contract with the Tennessee Valley Authority (TVA) for electricity. The TVA requires such agreements for projects exceeding 100 megawatts, so the missing contract could keep the supercluster from running at full capacity.
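
The article does not give the cluster's actual power draw. As a rough, hedged illustration of why the 100-megawatt threshold matters, the sketch below assumes each H100 draws about 700 W, the maximum rating of the SXM version (the article does not say which version xAI uses), and applies hypothetical overhead factors for CPUs, networking and cooling. None of these inputs except the GPU count and the threshold come from the article.

```python
# Back-of-envelope power estimate for the Memphis Supercluster.
# ASSUMPTIONS (not from the article): ~700 W maximum draw per H100 (SXM
# version) and hypothetical overhead factors for CPUs, networking and cooling.

GPU_COUNT = 100_000        # from the article
WATTS_PER_GPU = 700        # assumed maximum per-GPU draw
TVA_THRESHOLD_MW = 100     # contract threshold cited in the article


def gpu_power_mw(gpu_count: int, watts_per_gpu: float) -> float:
    """Aggregate GPU power in megawatts (1 MW = 1,000,000 W)."""
    return gpu_count * watts_per_gpu / 1_000_000


def facility_power_mw(gpu_mw: float, overhead_factor: float) -> float:
    """Scale GPU power by a hypothetical facility overhead factor."""
    return gpu_mw * overhead_factor


gpu_mw = gpu_power_mw(GPU_COUNT, WATTS_PER_GPU)
print(f"GPUs alone: {gpu_mw:.0f} MW")  # prints "GPUs alone: 70 MW"

for factor in (1.3, 1.5):  # hypothetical overhead factors
    total = facility_power_mw(gpu_mw, factor)
    print(f"overhead x{factor}: {total:.0f} MW, above threshold: {total > TVA_THRESHOLD_MW}")
```

Under these assumptions the GPUs alone come to about 70 MW, below the threshold; whether the whole facility exceeds 100 MW depends on overhead figures the article does not provide.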

Musk, who founded xAI, highlighted the supercluster’s capabilities and future ambitions in his announcements. The company offers its Grok LLMs, along with a chatbot of the same name, to paid subscribers on the X platform (formerly Twitter), and aims to compete with other major AI developers such as OpenAI.

Musk said the Memphis Supercluster would give xAI a “significant advantage” in training the world’s most powerful AI models by every metric, an ambitious goal he aims to reach by December this year.

Training LLMs

Training large language models (LLMs) like those developed by xAI involves massive datasets, high-performance hardware and substantial energy consumption.

The process requires vast amounts of text data, careful preprocessing and parallel processing across thousands of GPUs; the Memphis Supercluster’s 100,000 liquid-cooled H100s give a sense of the infrastructure involved. Training makes multiple passes over the data, uses optimization algorithms to adjust the model’s parameters, and must balance overfitting against generalization. Overfitting happens when a model learns the training data too closely, including its noise and outliers, and then performs poorly on new data. Generalization is the model’s ability to perform well on unseen, real-world data because it has captured the underlying patterns rather than the specific details of the training set.
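
To make the overfitting/generalization distinction concrete, here is a toy Python sketch. It has nothing to do with how LLMs are actually trained: it fits noisy points drawn from an assumed straight-line pattern (y = 2x + 1) with two models. A least-squares line captures the underlying trend, while a 1-nearest-neighbour "memorizer" reproduces every training point, noise included. The data, noise level and sample sizes are arbitrary choices for illustration.

```python
import random

# Toy illustration of overfitting vs generalization (ASSUMED setup,
# unrelated to LLM training). True pattern: y = 2x + 1, plus Gaussian noise.


def make_data(n, rng, noise=1.0):
    """Sample n noisy points from the assumed pattern y = 2x + 1."""
    xs = [rng.uniform(0.0, 10.0) for _ in range(n)]
    return [(x, 2.0 * x + 1.0 + rng.gauss(0.0, noise)) for x in xs]


def fit_line(data):
    """Ordinary least-squares line: learns only the overall trend."""
    n = len(data)
    mean_x = sum(x for x, _ in data) / n
    mean_y = sum(y for _, y in data) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in data)
    var = sum((x - mean_x) ** 2 for x, _ in data)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


def fit_memorizer(data):
    """1-nearest-neighbour lookup: returns the y of the closest training x,
    so it reproduces every training point exactly, noise and all."""
    def predict(x):
        _, nearest_y = min(data, key=lambda p: abs(p[0] - x))
        return nearest_y
    return predict


def mse(model, data):
    """Mean squared error of a model on a dataset."""
    return sum((model(x) - y) ** 2 for x, y in data) / len(data)


rng = random.Random(0)
train_set = make_data(300, rng)
test_set = make_data(300, rng)  # unseen data drawn from the same pattern

line = fit_line(train_set)
memorizer = fit_memorizer(train_set)
for name, model in [("line", line), ("memorizer", memorizer)]:
    print(f"{name:10s} train MSE={mse(model, train_set):.2f}  "
          f"test MSE={mse(model, test_set):.2f}")
```

The memorizer achieves zero training error but does worse than the line on the unseen test points, which is overfitting in miniature.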

Ethical considerations, such as mitigating bias, are also crucial. After initial training, models are fine-tuned and need robust infrastructure for deployment.

VentureBeat and other tech industry watchers have noted the competitive landscape of AI model development, with xAI positioning itself aggressively against established players. Deploying infrastructure on this scale shows Musk’s commitment to leading the AI race.

The Memphis Supercluster’s development also marks a significant move for the city of Memphis, promising not only technological advancements but also economic impact due to the scale of investment. However, the pending contract with the TVA remains a critical factor for the project’s success.