Insider Brief

- Generative adversarial networks (GANs) have experienced rapid progress, but face stability and efficiency issues.

- Chung-Ang University researchers have now developed a new strategy to tackle this problem with GANs, employing kernel functions and histogram transformations.

- The researchers say this offers improved performance and flexibility to adapt to different data types and objectives.

PRESS RELEASE — Within the arena of artificial intelligence and deep learning, generative adversarial networks (GANs) have garnered significant attention, owing to their rapid progress. GANs are particularly suited for generating images, music, and text. However, existing GAN models suffer from stability and efficiency issues. To address them, researchers from Chung-Ang University have now developed a new strategy, employing kernel functions and histogram transformations, demonstrating improved performance and flexibility to adapt to different data types and objectives.

In recent years, artificial intelligence (AI) and deep learning models have advanced rapidly, becoming easily accessible. This has enabled people, even those without specialized expertise, to perform various tasks with AI. Among these models, generative adversarial networks (GANs) stand out for their outstanding performance in generating new data instances with the same characteristics as the training data, making them particularly effective for generating images, music, and text.

GANs consist of two neural networks: a generator, which creates new data distributions starting from random noise, and a discriminator, which checks whether the generated data distribution is “real” (matching the training data) or “fake.” As training progresses, the generator improves at generating realistic distributions, and the discriminator improves at identifying generated data as fake. GANs use a loss function to measure differences between the fake and real distributions. However, this approach can cause issues like gradient vanishing and unstable learning, directly impacting stability and efficiency.
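The gradient-vanishing issue mentioned above can be illustrated with a small numeric sketch. It assumes the original minimax GAN formulation, in which the generator minimizes log(1 - D(G(z))) and the discriminator output D is a sigmoid of a raw score (a "logit"); the press release does not say which loss the researchers analyzed, so this is an illustration of the general problem rather than of their setup. The function names are hypothetical.

```python
import numpy as np

def sigmoid(a):
    """Map a raw discriminator score (logit) to a probability of 'real'."""
    return 1.0 / (1.0 + np.exp(-a))

def saturating_generator_grad(logit):
    """Gradient of log(1 - sigmoid(logit)) with respect to the logit.

    Analytically this equals -sigmoid(logit), so it shrinks toward zero
    when the discriminator confidently labels a sample as fake
    (a very negative logit).
    """
    return -sigmoid(logit)

# The more confidently the discriminator rejects a generated sample,
# the smaller the learning signal that reaches the generator.
for logit in (0.0, -5.0, -10.0):
    print(f"logit={logit:6.1f}  gradient={saturating_generator_grad(logit):.6f}")
```

In this setting, a discriminator that becomes too good too early leaves the generator with almost no gradient to learn from, which is one reason training can stall.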

Despite considerable progress in improving GANs, including structural modifications and loss function adjustments, challenges such as gradient vanishing and mode collapse, where the generator produces only a limited variety of outputs, continue to limit their applicability.

To address these issues, a team of researchers led by Assistant Professor Minhyeok Lee from the School of Electrical and Electronics Engineering at Chung-Ang University, Republic of Korea, developed a novel strategy. “Imagine teaching an artist to paint landscapes. Consistent guidance may lead them to produce similar scenes, a phenomenon called mode collapse in machine learning. To prevent this, our PMF-GAN model refines the discriminator’s capabilities, penalizing the generator for producing overly similar outputs, thereby promoting diversity,” explains Dr. Lee. Their findings were made available online on July 18, 2024, and published in Volume 164 of the journal Applied Soft Computing in October 2024.

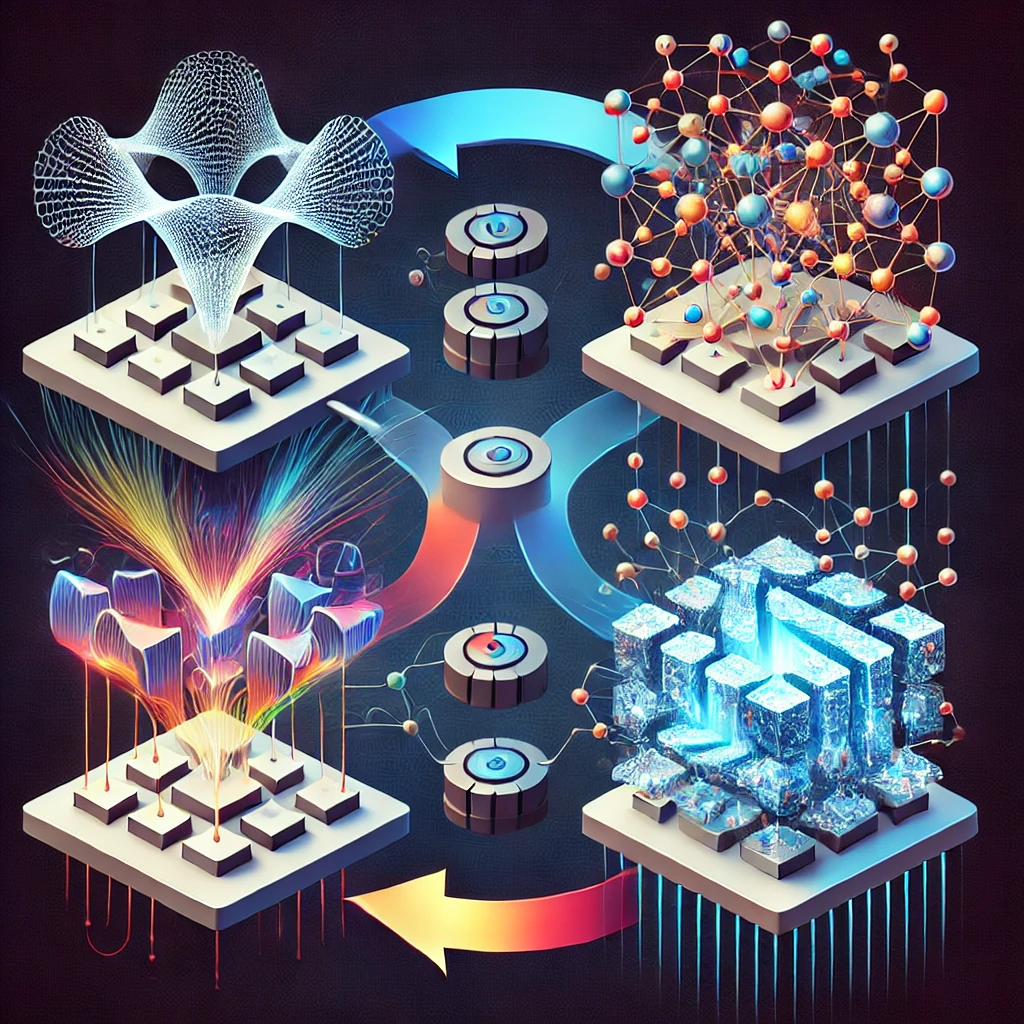

The PMF-GAN framework introduces two key enhancements. First, it employs kernel optimization to refine the ability of the discriminator, offering a significant advantage in addressing mode collapse and gradient vanishing. Kernels are mathematical functions that transform data into a higher-dimensional space, making it easier to detect patterns even in complex data. The output of the discriminator is processed through kernel functions, producing a kernel density estimate (KDE). Second, PMF-GAN applies a mathematical technique called histogram transformation to the KDE output, enabling a more intuitive analysis of the results. During training, the model minimizes the difference between the kernel-histogram-transformed fake and real distributions, a measure called the PMF distance.
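The pipeline described above (discriminator outputs, then a kernel density estimate, then a histogram-style transformation, then a distance between the real and fake results) can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: the Gaussian kernel, the fixed bandwidth, the number of bins, the squared-difference distance, and all function names are assumptions made for the example, and the discriminator outputs are treated as plain one-dimensional arrays of scores.

```python
import numpy as np

def gaussian_kde_on_grid(samples, grid, bandwidth):
    """Evaluate a Gaussian kernel density estimate of `samples` at each grid point."""
    diffs = (grid[:, None] - samples[None, :]) / bandwidth
    kernel = np.exp(-0.5 * diffs ** 2) / np.sqrt(2.0 * np.pi)
    return kernel.mean(axis=1) / bandwidth

def to_pmf(density):
    """Normalize density values sampled on a uniform grid so they sum to 1."""
    return density / density.sum()

def pmf_distance(real_scores, fake_scores, n_bins=32, bandwidth=0.2):
    """Squared-difference distance between the PMFs of real and fake scores.

    Assumed choices (not taken from the paper): Gaussian kernel, shared
    uniform bins spanning both score sets, sum of squared differences.
    """
    lo = min(real_scores.min(), fake_scores.min())
    hi = max(real_scores.max(), fake_scores.max())
    edges = np.linspace(lo, hi, n_bins + 1)
    centers = 0.5 * (edges[:-1] + edges[1:])  # one value per histogram bin
    p_real = to_pmf(gaussian_kde_on_grid(real_scores, centers, bandwidth))
    p_fake = to_pmf(gaussian_kde_on_grid(fake_scores, centers, bandwidth))
    return float(np.sum((p_real - p_fake) ** 2))

# Hypothetical discriminator scores for a batch of real and generated samples.
rng = np.random.default_rng(0)
real = rng.normal(1.0, 0.3, 500)
fake = rng.normal(-1.0, 0.3, 500)
print("distance (real vs fake):", pmf_distance(real, fake))
print("distance (real vs real):", pmf_distance(real, real))
```

Because every step here is a smooth function of the scores, a quantity of this kind could in principle serve as a training signal in an automatic-differentiation framework; how PMF-GAN actually does so is detailed in the paper, not in this sketch.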

Notably, this approach allows for the use of various mathematical distance functions and kernel functions. This flexibility allows PMF-GAN to be adapted to different data types and learning objectives. Additionally, PMF-GAN can be integrated into existing improved GAN architectures for even better performance. In experiments, PMF-GAN outperformed several baseline models in terms of visual quality and evaluation metrics across multiple datasets. For the Animal Faces-HQ dataset, it showed a 56.9% improvement in the inception score and a 61.5% improvement in the Fréchet inception distance (FID) score compared to the conventional WGAN-GP model.

“The flexibility and performance improvements presented by PMF-GAN open new possibilities for generating synthetic data in various technological and digital fields. In healthcare, it will lead to more stable and diverse image generation. It also enables more realistic and varied computer-generated visuals for films, video games, and virtual reality experiences,” remarks Dr. Lee. “As AI-generated content becomes more prevalent in our daily lives, our method improves the quality and diversity of the content, and will ensure that AI continues to be a valuable tool for human creativity and problem-solving,” he concludes.