Insider Brief

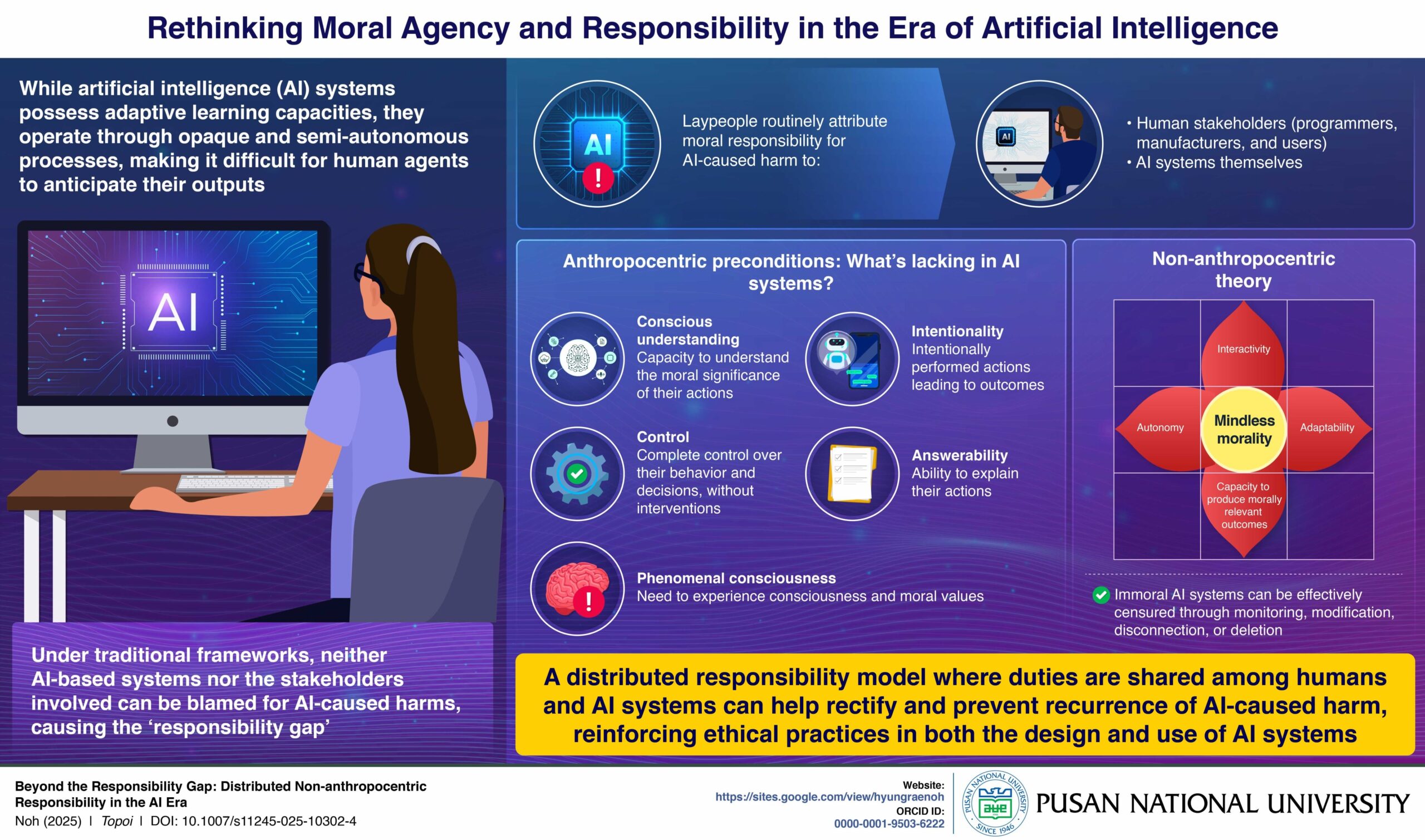

- A study from Pusan National University argues that responsibility for AI-caused harm should be distributed across both human stakeholders and autonomous systems, challenging traditional ethical models centered on intention and free will.

- Led by assistant professor Hyungrae Noh and published in Topoi, the research examines how semi-autonomous AI systems create a “responsibility gap” when harm occurs without malicious intent and outside human foresight.

- The study proposes a networked model of responsibility—spanning developers, users, institutions, and AI systems with operational autonomy—as a more realistic framework for mitigating and preventing AI-mediated harm.

When AI causes harm, who is to blame?

This is the question researchers from Pusan National University tackled in a recent study that argues responsibility for AI-caused harm must be shared between humans and the systems they build, challenging long-standing ethical models that rely on intention, free will, and other human mental capacities. The work, published in Topoi and supported by the university’s philosophy program, examines how traditional frameworks fail when applied to highly autonomous technologies.

According to the university, the study, led by Hyungrae Noh, an assistant professor of philosophy at Pusan National University, explores the growing number of incidents in which AI systems cause harm despite no malicious intent from developers or users. Because these systems operate semi-autonomously and in ways even their creators cannot fully predict, the research argues that holding any single group responsible — engineers, operators, or the AI itself — is insufficient. Noh’s analysis describes this problem as a structural gap in modern AI ethics.

“Instead of insisting traditional ethical frameworks in contexts of AI, it is important to acknowledge the idea of distributed responsibility,” Dr. Noh noted. “This implies a shared duty of both human stakeholders — including programmers, users, and developers — and AI agents themselves to address AI-mediated harms, even when the harm was not anticipated or intended. This will help to promptly rectify errors and prevent their recurrence, thereby reinforcing ethical practices in both the design and use of AI systems.”

At the center of the findings is the claim that conventional responsibility models depend on traits such as conscious awareness, intentionality, and the ability to understand the moral weight of one’s actions. According to Noh’s study, AI systems lack these capacities. They do not form intentions, do not possess subjective experience, and do not operate with fully transparent decision processes. For these reasons, the research concludes that AI cannot be assessed under the same moral standards used for humans, leaving a mismatch between the harms that occur and the frameworks available to address them, a mismatch the researchers refer to as the responsibility gap in AI.

The paper expands on this critique by examining non-anthropocentric theories of agency, especially work by philosopher Luciano Floridi and others who propose a distributed model of responsibility, the university pointed out. Rather than treating responsibility as an attribute assigned to one actor, this model frames it as a networked duty shared across developers, operators, institutions, and AI systems with meaningful autonomy. Under this approach, humans have an ongoing obligation to monitor, adjust, and deactivate systems that exhibit harmful behavior, while autonomous AI systems may also bear limited responsibilities tied to their operational capacities.

Noh’s study argues that this distributed model is better suited to real-world scenarios where harm emerges from complex interactions between algorithms, data, context, and human oversight. By spreading responsibility across the full chain of stakeholders, the model attempts to close the gap created when no single actor can be said to have intended the harmful outcome. The study frames this as a practical shift rather than a philosophical abstraction, emphasizing the need for rapid mitigation and continuous oversight in both design and deployment.

The research also situates these ideas within broader questions about how societies should assign accountability in an era when AI systems influence transportation, medicine, education, and employment. Noh highlights cases in which AI harms occur despite adherence to standard development practices, noting that such examples challenge traditional expectations of blame and liability.

The study’s methods rely on philosophical analysis, evaluation of existing ethical models, and engagement with emerging empirical work on AI autonomy. Unlike computational or experimental research, the paper draws on conceptual analysis to map the limits of current responsibility frameworks. The author identifies, as a central limitation of existing theories, their inability to explain how responsibility should be attributed when AI behaves unpredictably.

“With AI-technologies becoming deeply integrated in our lives, the instances of AI-mediated harm are bound to increase,” Dr. Noh said. “So, it is crucial to understand who is morally responsible for the unforeseeable harms caused by AI.”