Insider Brief

- AI chip startup Fractile raised $220 million to develop hardware designed to accelerate AI inference workloads and reduce processing bottlenecks in advanced AI systems.

- The funding round was led by Accel, Factorial Funds and Founders Fund, with participation from several additional venture capital firms and existing investors.

- Fractile said current AI workloads involving tens of millions of tokens can take weeks to complete on existing hardware, creating demand for faster and more efficient inference systems.

London-based AI chip startup Fractile announced in a company blog post that it has raised $220 million in a funding round led by Accel, Factorial Funds and Founders Fund, as investors continue pouring capital into hardware designed to support the growing computational demands of artificial intelligence.

The company said the funding will be used to accelerate development of its first AI chips and systems and move them into customer deployments. Additional investors in the round included Conviction, Gigascale, O1A, Felicis, Buckley Ventures and 8VC.

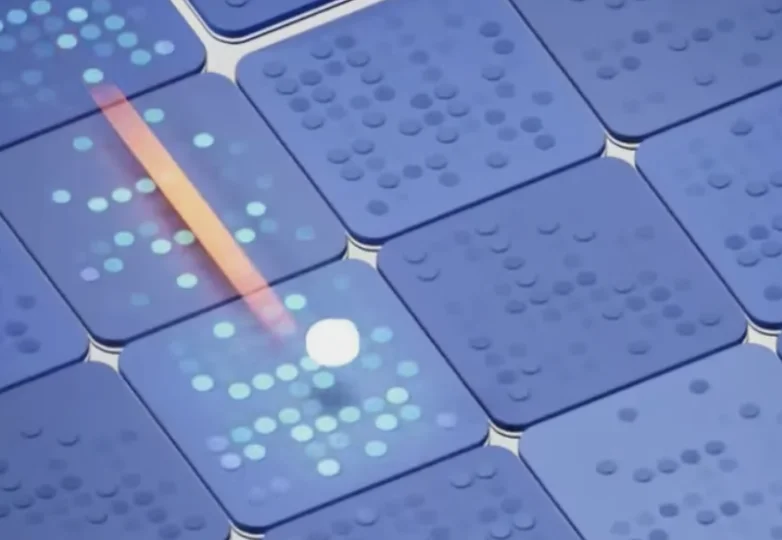

Founded in 2022, Fractile is developing semiconductor hardware aimed at speeding up AI inference, the process by which trained AI models generate responses and predictions after deployment. The company argues that inference speed is becoming a major bottleneck as advanced AI systems generate increasingly long outputs and consume larger amounts of computing power.

The financing comes amid an industry-wide push to redesign AI infrastructure as developers move beyond training ever-larger models and toward running them efficiently at scale. While much of the current AI hardware market is dominated by graphics processing units, or GPUs, startups and large technology companies alike are pursuing specialized architectures aimed at lowering cost, reducing latency and improving energy efficiency for inference workloads.

Fractile said some frontier AI systems are already generating outputs containing tens of millions of tokens, the units of text processed by large language models. According to the company, those workloads can take weeks to complete on current hardware because of limits tied to memory bandwidth and data movement inside chips.

The company said current systems typically generate around 40 tokens per second for such large workloads, while more advanced use cases may require output speeds closer to 1,200 tokens per second to reduce processing times from weeks to days.
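To put those figures in perspective, the arithmetic behind them can be sketched in a few lines of Python. This is only a back-of-the-envelope illustration, not Fractile's own model: the workload sizes are hypothetical (the company said only "tens of millions" of tokens), the function name `generation_days` is made up for this example, and the calculation assumes a single output stream generated at a constant rate.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def generation_days(total_tokens: int, tokens_per_second: float) -> float:
    """Days needed to emit total_tokens sequentially at a constant rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return total_tokens / tokens_per_second / SECONDS_PER_DAY


# The two rates come from the article; the workload sizes are assumptions.
CURRENT_RATE = 40      # tokens per second on existing hardware
TARGET_RATE = 1_200    # tokens per second cited for advanced use cases

for total_tokens in (10_000_000, 50_000_000, 100_000_000):
    slow = generation_days(total_tokens, CURRENT_RATE)
    fast = generation_days(total_tokens, TARGET_RATE)
    print(
        f"{total_tokens:>11,} tokens: "
        f"{slow:5.1f} days at {CURRENT_RATE} tok/s, "
        f"{fast:5.2f} days at {TARGET_RATE:,} tok/s"
    )
```

Under these assumptions, a 50-million-token output takes roughly 14.5 days at 40 tokens per second. Moving from 40 to 1,200 tokens per second is a 30-fold speedup regardless of workload size, so the same job would finish in about half a day.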

Fractile is positioning its technology around what the company describes as “long-context” AI workloads, in which models process and generate very large chains of reasoning over extended sequences of text and intermediate outputs. The company linked those capabilities to emerging applications in software engineering, scientific research, drug discovery and materials science.

The company also framed the challenge as an economic issue for the broader AI sector. Inference has become one of the largest operating costs for AI providers as consumer and enterprise applications scale. Faster and cheaper inference systems could allow AI developers to run more complex workloads without sharply increasing computing expenses.

Fractile said it has been working across multiple layers of the AI hardware stack, including chip architecture, semiconductor manufacturing processes and AI systems research. The company did not disclose when its first commercial chips are expected to ship or identify manufacturing partners.

The announcement reflects continued investor appetite for AI infrastructure companies despite growing concerns about rising capital expenditures and competition in the semiconductor sector. Since the launch of generative AI systems such as OpenAI’s ChatGPT, venture capital firms and major technology companies have committed billions of dollars toward AI chips, networking equipment, memory systems and data center expansion.

Fractile added that it is hiring across offices in London, Bristol, San Francisco and Taipei as it expands development of its hardware platform.