Insider Brief

- A new working paper finds that AI coding agents are associated with a 39% increase in software output after becoming the default workflow.

- Experienced workers accept agent-generated code at higher rates and are more likely to use planning-oriented prompts that improve alignment with user intent.

- Non-engineering roles such as design and product management show high adoption levels, indicating that AI agents are enabling specialist tasks outside traditional technical teams.

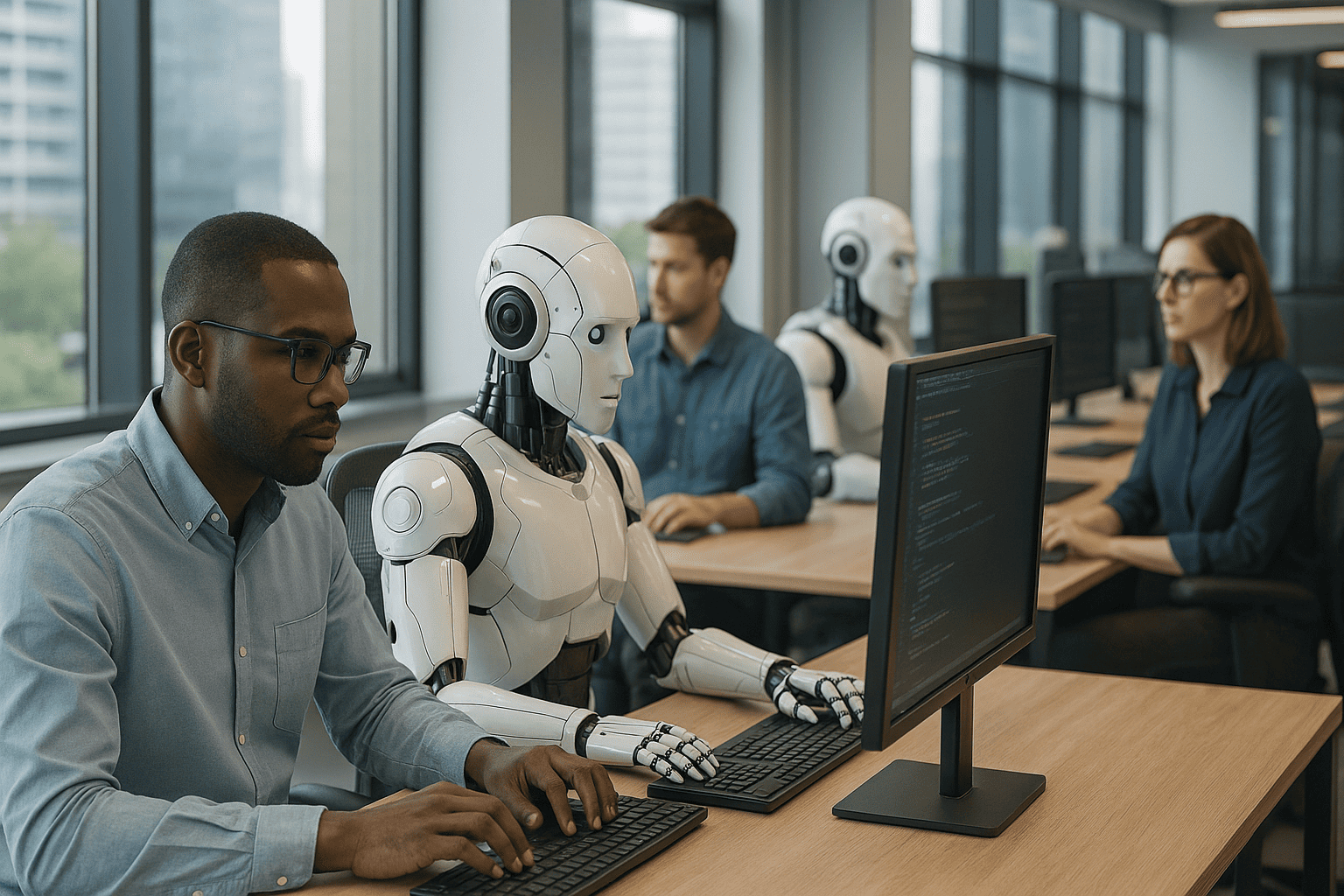

A new working paper suggests that the first real productivity jump from artificial intelligence may already be happening—just not where most people expected.

As economists argue over whether generative AI is a transformative technology or an over-promised assistant, early field evidence from software developers points in a different direction. Suproteem K. Sarkar, a researcher at the University of Chicago Booth School of Business, analyzed the rollout of a coding agent across 1,000 organizations and found that firms using the tool saw a 39% increase in weekly code merges after it became the default workflow.

Those results may challenge the prevailing narrative that AI has yet to move the economic needle.

The study focuses on Cursor Agent, a coding tool that turns natural-language instructions into software changes and can search codebases, run tests, and call external tools. While AI copilots and autocomplete features have existed for years, this study tracks the adoption of a fully agentic system—one that can carry out multi-step tasks rather than merely suggest code fragments.

A Rare Productivity Signal in the Wild

Empirical evidence of AI-related productivity shifts has been scarce, despite strong predictions that AI would accelerate knowledge work. What makes these findings notable is that they stem from a natural experiment: the coding agent was rolled out in November 2024 and became the default generation mode in February 2025. In the 15 weeks that followed, organizations already using the platform saw software output rise sharply, far outpacing untouched control groups.
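To make the comparison against control groups concrete, here is a minimal sketch of a difference-in-differences calculation, one common way to read a rollout like this. It is an assumption for illustration only: the article does not say which estimator the paper uses, the function names are hypothetical, and the merge counts are invented, not taken from the study.

```python
def pct_change(before: float, after: float) -> float:
    """Percentage change from `before` to `after`."""
    return 100.0 * (after - before) / before


def diff_in_diff_pct(treated_before: float, treated_after: float,
                     control_before: float, control_after: float) -> float:
    """Growth in the treated group minus growth in the control group.

    Subtracting the control group's growth removes trends that would
    have happened anyway (seasonality, hiring, and so on).
    """
    return (pct_change(treated_before, treated_after)
            - pct_change(control_before, control_after))


# Invented weekly merge counts, purely illustrative.
effect = diff_in_diff_pct(
    treated_before=50, treated_after=70,   # treated group grew 40%
    control_before=50, control_after=55,   # control group grew 10%
)
print(f"Estimated effect: {effect:.1f} percentage points")
```

The point of the subtraction is that a raw before-and-after jump overstates the effect whenever output was already trending upward across all firms.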

At the same time, quality didn’t deteriorate. Revert rates and bugfix rates did not increase, suggesting that developers weren’t papering over problems with fragile, machine-generated code.

The results point to something more important than incremental automation: developers offloaded low-level implementation work and spent more time specifying goals and evaluating output. The paper describes this as a shift from syntactic work to semantic work, where workers focus less on typing and more on communicating intent.

One of the study’s most surprising findings is the reversal of the traditional AI skill gradient. Historically, AI tools have helped junior workers more than senior ones. Autocompletion tools, for instance, are disproportionately used by younger programmers.

But not with agents.

In this study, each standard-deviation increase in work experience was associated with a 5–6% higher likelihood of accepting agent-generated code.

They also tended to begin their agent conversations with planning instructions, laying out objectives, alternatives, and steps, something junior developers did far less frequently. These “plan-first” messages were correlated with higher accept rates, hinting that clarity and foresight may be emerging as the central skills in AI-augmented work.

This contradicts the assumption that AI will level the field by enabling novices to perform at expert levels. Instead, the data suggests that expertise improves the ability to delegate to AI, while experience helps workers form better mental models of what agents can and cannot do.

Non-Engineers Are Becoming Software Producers

Although the majority of agent users are software developers, the study finds heavy adoption among people in roles that previously relied on engineers for implementation—including designers, consultants, and product managers. Designers submit more messages per week than engineers, and accept generated code more frequently.

This is another potential productivity tailwind: AI appears to be dissolving some of the functional boundaries inside tech organizations. Workers who once communicated requirements through tickets or mockups can now generate and deploy working prototypes themselves, accelerating iteration cycles.

The data also offers a window into how knowledge work is changing. More than a third of agent prompts involve planning, explaining code, or diagnosing errors rather than writing features outright.

The study suggests that agents have become quasi-colleagues: people ask them to reason, justify, reflect and plan. This dynamic mirrors earlier shifts in industrial production, when machinery automated manual tasks and forced workers to engage in more conceptual decision-making. Agents appear to be initiating a similar transition in digital labor.

A Counterpoint to the ‘AI Isn’t Improving Productivity’ Narrative?

For months, economists have questioned why the surge in corporate AI investment hasn’t shown up in aggregate productivity statistics. The working paper offers one potential answer: productivity gains may already be occurring inside specific task categories and early-adopter firms, and have not yet scaled widely enough to influence macroeconomic indicators.

The results also align with historical patterns seen during the adoption of earlier general-purpose technologies. Productivity often lags while organizations figure out how to reorganize workflows and redesign processes around new tools—and then accelerates once complementarities emerge.

The fact that the productivity bump appeared within weeks of the agent becoming the default mode suggests that generative agents may require less organizational restructuring than prior breakthroughs.

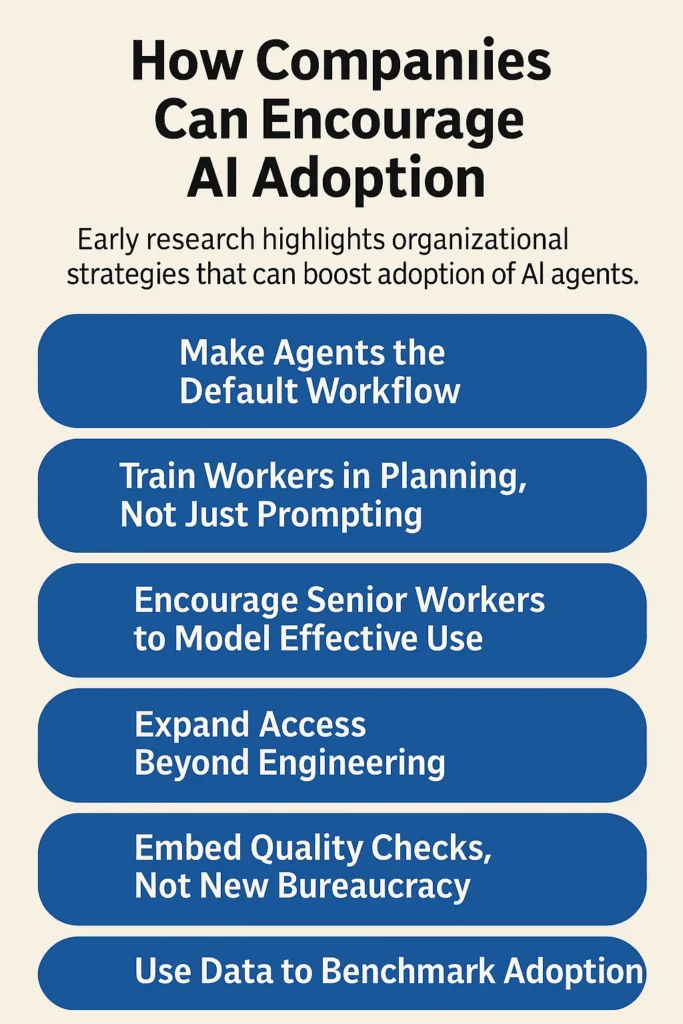

How Companies Can Encourage AI Adoption — Evidence-Based Steps

While the study is not a management guide, its findings point to several actionable steps organizations can take to accelerate adoption and capture AI-related productivity gains. One clear pattern is the impact of defaults. The sharpest increase in output occurred only after the coding agent shifted from an optional feature to the system’s standard workflow, suggesting that embedding AI directly into core tools—rather than offering it as an add-on—may matter more than executives expect.

The data also shows that how employees interact with agents shapes the quality of the output they accept. Workers who began conversations by laying out a plan — defining goals, outlining steps, or anticipating possible failure points — were more likely to accept the code an agent produced. That pattern has led some researchers to argue that companies should focus less on generic “prompt engineering” and more on teaching employees how to structure tasks and preview instructions before invoking an agent.

Experienced workers appear to play an outsized role in setting these norms. Senior developers not only accept agent-generated output at higher rates but also demonstrate more consistent planning behavior, making them effective internal guides as teams navigate new workflows. Their habits — formed from years of explaining intent to colleagues and evaluating code — often carry over cleanly to AI systems.

Another takeaway is that restricting AI agents to engineering teams may leave productivity gains on the table. Designers and product managers used the tool frequently in the study, sometimes even more than software engineers, and often accepted more generated output. Early adoption among consulting and design groups points to broader applicability beyond traditional technical roles.

Despite the bump in output, firms still need guardrails. The study found no immediate deterioration in software quality, but long-term maintainability requires oversight. Companies in the dataset relied on lightweight checks—reviews of test coverage, file changes, or architectural implications—rather than new layers of bureaucracy, a balance that kept confidence high without slowing development.

Finally, the research suggests that measuring adoption is part of the adoption. Some organizations tracked agent usage, acceptance behavior, and output trends to identify bottlenecks or uneven uptake across teams. Treating agent interaction patterns like any other operational metric helped firms understand where workflows were working—and where they needed reinforcement.
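As a rough illustration of what tracking agent usage and acceptance behavior might look like in practice, the sketch below aggregates a log of agent interactions by team and week. Everything here is an assumption made for the example: the `AgentEvent` record, its fields, and both helper functions are hypothetical and do not come from the study or from any particular tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentEvent:
    """One agent suggestion shown to a user (hypothetical log record)."""
    team: str
    week: int
    accepted: bool


def weekly_accept_rates(events):
    """Return {(team, week): (suggestion_count, accept_rate)}."""
    counts = defaultdict(lambda: [0, 0])  # [total, accepted]
    for event in events:
        bucket = counts[(event.team, event.week)]
        bucket[0] += 1
        bucket[1] += int(event.accepted)
    return {key: (total, accepted / total)
            for key, (total, accepted) in counts.items()}


def teams_below_usage(events, min_events):
    """Return teams, sorted by name, with fewer than `min_events` suggestions."""
    totals = defaultdict(int)
    for event in events:
        totals[event.team] += 1
    return sorted(team for team, n in totals.items() if n < min_events)


# Invented sample log, purely illustrative.
sample_events = [
    AgentEvent("design", 1, True),
    AgentEvent("design", 1, False),
    AgentEvent("eng", 1, True),
]
print(weekly_accept_rates(sample_events))
print(teams_below_usage(sample_events, min_events=2))
```

Keeping the suggestion count alongside the rate matters: an accept rate computed from a handful of events says little, and low counts are themselves the signal of uneven uptake.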

This is a working paper and has not yet been peer reviewed. At best, it provides early evidence, limited to software teams and firms that adopted the agent. Still, it offers a rare, quantified look at how agents change worker cognition, organizational output, and skill differentials.

If similar patterns hold across writing, analytics, accounting, law, and other fields where work can be expressed in natural language, then agents — not base models — may prove to be the true economic engine of the next decade.