Insider Brief

- Ant Group introduced LingGuang, a multimodal AI assistant that generates answers as 3D models, animations, real-time visual analysis and code-built flash apps in under 30 seconds, processing language, images, audio, data and application code through a unified modular framework.

- The system’s three core features include Fast Research for dynamic 3D and illustrated explanations, Flash App for instant no-code mini-apps, and AGI Camera for real-time scene understanding and on-the-fly image or video generation.

- Ant Group positions LingGuang as China’s first broadly accessible AI tool enabling non-technical users to create functional applications and interact with AI across multiple formats in a single workflow.

Ant Group launched LingGuang, a multimodal AI assistant designed to move beyond text-based chat by delivering answers as 3D models, animations, real-time visual analysis and custom, code-built flash apps in as little as 30 seconds. Built to interpret and produce language, images, audio, data and application code, LingGuang processes user queries through a modular framework that assembles responses across multiple formats at once, according to the company.

The system centers on three features. “Fast Research” gathers information on a topic and renders it as dynamic visual content, including 3D digital models of landmarks or historical subjects and generative illustrations that break down complex ideas such as quantum entanglement or economic principles, according to the company. Users can also navigate interactive maps to plan routes or explore local services.

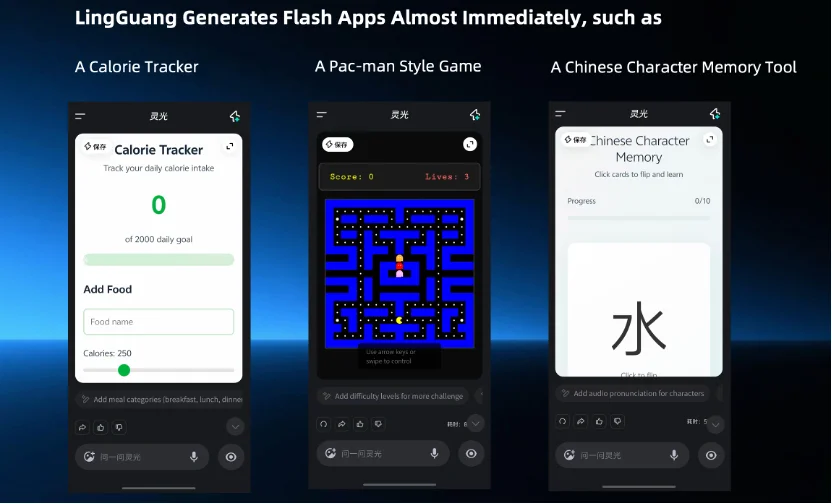

“Flash App” uses LingGuang’s native coding engine to build functional mini-applications directly inside the conversation. With simple prompts, users can generate custom tools for fitness tracking, budgeting, trip planning, meal suggestions or shopping assistance, often in under 30 seconds. Ant Group positions this as the first broadly accessible AI tool in China that allows non-technical users to produce and run personalized applications instantly.

The third feature, “AGI Camera,” extends LingGuang’s multimodal abilities to real-world imagery, interpreting photos and video in real time and providing contextual understanding of scenes, the company said. The system can detect objects, assess conditions, and edit or generate new images or clips on the fly, blending analysis and creative output within a single workflow.

“At Ant Group, we believe Artificial General Intelligence should be a public good — something that benefits everyone, not just experts,” He Zhengyu, Chief Technology Officer of Ant Group, noted in the announcement. “LingGuang is bringing every user their own personal AI developer: someone who can code, create visuals, build apps, and turn complex ideas into simple solutions—right in your pocket.”

Image credit: Ant Group