Luminal, an AI infrastructure startup focused on accelerating model inference through advanced compiler optimization, has raised $5.3 million in seed funding to address one of the most persistent bottlenecks in AI compute: developer usability. The round was led by Felicis Ventures, with participation from notable angel investors including Paul Graham, Guillermo Rauch, and Ben Porterfield.

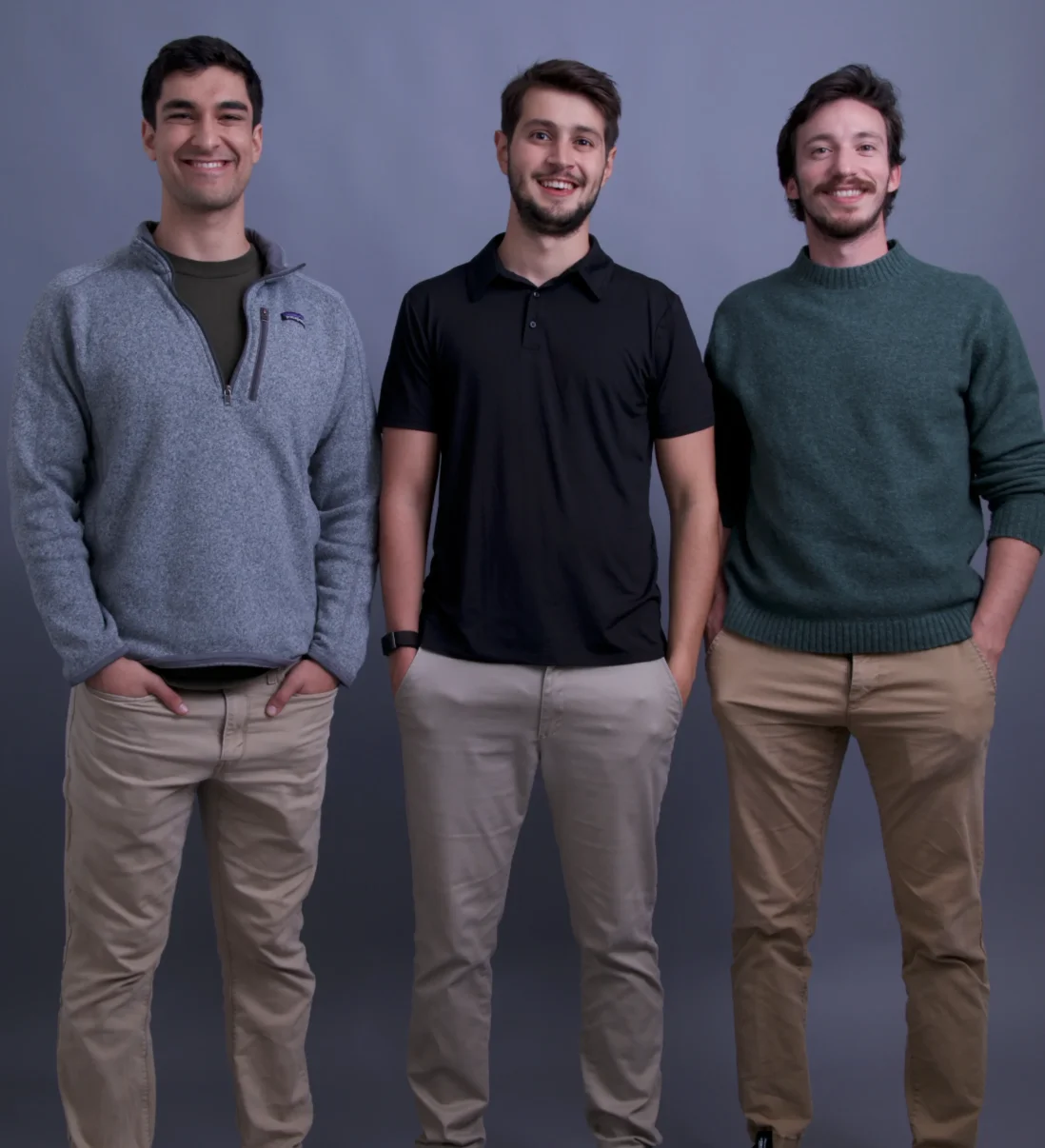

Founded by former Intel engineer Joe Fioti alongside co-founders from Apple and Amazon, Luminal emerged from Y Combinator’s Summer 2025 cohort with a mission to unlock more performance from existing GPU infrastructure. Instead of competing on hardware, the company builds optimization systems that sit between model code and GPU execution, enabling customers to run workloads faster and more efficiently.

Luminal targets the inefficiencies left unaddressed by legacy systems such as CUDA. It joins a rapidly growing group of inference-optimization startups responding to global GPU scarcity. By improving compilers and runtime systems, the company aims to give developers higher throughput and lower compute costs across diverse model architectures.

The new capital will support product development and customer expansion as AI labs and enterprises look to get more performance out of scarce GPU capacity. Luminal aims to establish itself as a critical layer in the AI compute stack, improving accessibility and performance for teams deploying large-scale models in production environments.

Featured image credit: Luminal