Insider Brief

- Universal Robots introduced the UR AI Trainer at GTC 2026, an imitation learning system designed to shift robotics from pre-programmed workflows to AI-driven tasks using production-grade hardware.

- The platform captures synchronized motion, force and visual data through a leader-follower setup, addressing gaps between lab-based training and real-world deployment while enabling more effective training of vision-language-action models.

- Built with Scale AI and running on UR’s AI Accelerator, the system creates a continuous data feedback loop to improve model performance over time and support scalable deployment in industrial environments.

Universal Robots has introduced an imitation learning system to move robots from pre-programmed to AI-driven tasks.

At GTC 2026, the company, part of Teradyne Robotics, unveiled the UR AI Trainer, developed with Scale AI. It said the system lets developers collect synchronized motion, force and visual data directly from industrial robots, addressing a persistent limitation in robotics training pipelines.

According to Universal Robots, much of today’s AI training data is captured on research platforms that do not translate cleanly to factory environments. The AI Trainer is intended to bridge that gap by allowing teams to train models on production-grade robots, reducing the disconnect between lab development and real-world deployment.

“Our customers, ranging from large enterprises to AI research labs, are no longer just asking for AI features,” VP of AI Robotics Products Anders Beck said in the announcement. “They need a way to collect high-fidelity, synchronized robot and vision data to train AI models on the same robots they intend to deploy. Our AI Trainer is the industry’s first direct lab-to-factory solution for AI model training.”

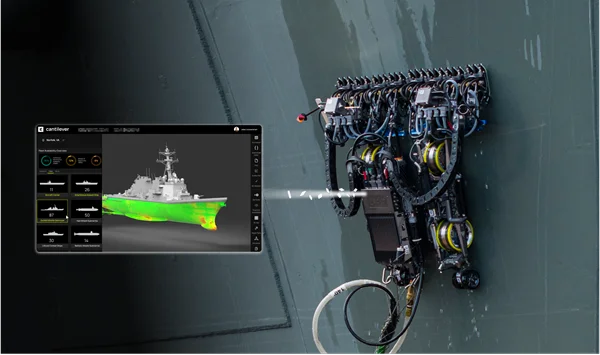

The system uses a “leader-follower” setup in which a human operator guides one robot through a task while a second robot mirrors the motion in real time. During this process, the platform records multimodal data, including physical interaction forces, to create structured datasets used to train vision-language-action models. Universal Robots said this approach is designed to improve performance in tasks that require precision and contact, areas where vision-only systems often fall short.
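To make "synchronized" concrete, here is a minimal Python sketch of one common way to align separately sampled streams: treat camera frames as the reference clock and attach the nearest motion and force readings by timestamp. This is an illustration only, not Universal Robots' or Scale AI's implementation. The names `Sample`, `nearest_index`, `synchronize` and the `max_skew` tolerance are hypothetical, as is the assumption that each stream arrives as a time-sorted list of `(timestamp, payload)` pairs.

```python
from bisect import bisect_left
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass
class Sample:
    """One aligned training record (hypothetical layout)."""
    t: float                            # camera frame timestamp, seconds
    joint_positions: Tuple[float, ...]  # follower robot joint angles
    wrench: Tuple[float, ...]           # measured contact forces/torques
    frame_id: int                       # index of the camera frame


def nearest_index(timestamps: Sequence[float], t: float) -> int:
    """Index of the entry in a sorted, non-empty list closest to t."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Choose whichever neighbour is closer in time.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def synchronize(motion, force, frames, max_skew: float = 0.02) -> List[Sample]:
    """Pair each camera frame with the nearest motion and force readings.

    Frames whose nearest readings are more than max_skew seconds away
    are dropped rather than paired with stale data.
    """
    if not motion or not force:
        return []
    motion_t = [t for t, _ in motion]
    force_t = [t for t, _ in force]
    samples = []
    for t, frame_id in frames:
        mi = nearest_index(motion_t, t)
        fi = nearest_index(force_t, t)
        if abs(motion_t[mi] - t) > max_skew or abs(force_t[fi] - t) > max_skew:
            continue
        samples.append(Sample(t, motion[mi][1], force[fi][1], frame_id))
    return samples
```

In this sketch, a frame with no sufficiently close robot reading is discarded. That is one possible design choice; interpolating between neighbouring readings would be another.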

The platform runs on Universal Robots’ AI Accelerator and integrates Scale AI’s data infrastructure, enabling what the companies describe as a continuous feedback loop between data collection, model training and deployment. The goal is to create a data pipeline that improves over time as more real-world interactions are captured.

“Universal Robots is a leader in industrial robotics, and its global footprint offers the ideal foundation for data capture and AI deployment,” noted Ben Levin, General Manager, Physical AI at Scale AI. “Together, we’ve created an integrated robotics data flywheel, allowing customers to train, deploy and improve their AI models faster than ever before.”

Later in 2026, the companies will release a “large-scale industrial dataset collected on UR robots.”

Universal Robots said it is also exploring the use of Nvidia’s simulation tools to supplement real-world data with synthetic datasets. The company indicated that combining simulation with physical data capture could help scale training while maintaining accuracy in complex environments.

In parallel with the AI Trainer, Universal Robots demonstrated a robotic foundation model from partner Generalist AI, with two robots completing a multi-step smartphone packaging task that required coordination and fine manipulation.

Image credit: Universal Robots