Insider Brief

- A Harvard study proposes a “cy-trust” framework for multi-agent AI systems, arguing current cybersecurity approaches are insufficient for real-world deployment and safety.

- The framework assigns trust scores to data between autonomous agents, helping systems such as robots and self-driving vehicles detect malicious or unreliable inputs and avoid cascading failures.

- Researchers demonstrated improved resilience in lab tests and emphasized that trust mechanisms and policy frameworks will be critical as AI systems scale into real-world environments.

A new Harvard study proposes a framework for embedding trust into multi-agent AI systems such as robots and autonomous vehicles, and argues that current cybersecurity approaches are insufficient for real-world deployment and safety.

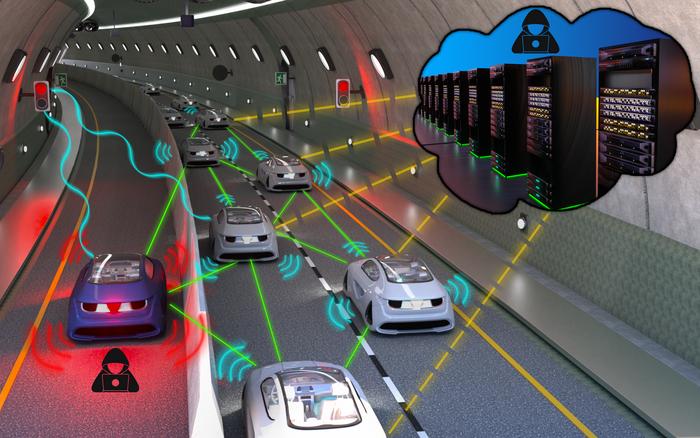

According to Harvard, researchers from the John A. Paulson School of Engineering and Applied Sciences (SEAS) and a multi-university team outline a concept called “cy-trust,” a quantitative method for determining how much one autonomous system, such as a robot or vehicle, should trust data or instructions from another. The work focuses on cyber-physical systems, where AI-driven machines must coordinate in real time, including applications such as self-driving vehicle fleets, smart power grids and robotic networks.

The study, published in Proceedings of the IEEE, found that traditional cybersecurity models, which emphasize access control and data protection, do not address a central challenge in these environments: whether incoming information is reliable enough to act on. In multi-agent systems, incorrect or malicious data can propagate quickly, leading to cascading failures in physical environments.

“Cyber-physical systems are going to become very pervasive,” noted Stephanie Gil, the John L. Loeb Associate Professor of Engineering and Applied Sciences at SEAS and an associate faculty member in the Kempner Institute. “The question is, how do we secure these systems? How do we make sure they are going to be resilient as they go into the real world? This is something we had to learn from making internet systems secure.”

The researchers identify several risks unique to these systems, including adversarial agents that manipulate shared data, falsify locations or exploit coordination rules. These failures can have real-world consequences, from traffic disruptions in autonomous vehicle networks to gaps in coverage during search-and-rescue operations.

To address this, the proposed cy-trust framework assigns each data input a numerical trust score between zero and one, based on factors such as sensor validation, network behavior, context and historical reliability. These scores determine how much influence each piece of information has on system decisions, allowing agents to discount or ignore potentially compromised inputs.
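The weighting idea can be illustrated with a minimal sketch. This is not the paper's algorithm; it only assumes that each neighbor's report arrives with a trust score in [0, 1] and that an agent fuses the reports with a trust-weighted average. The function name `trust_weighted_estimate` and the `min_trust` cutoff are hypothetical, introduced for illustration.

```python
from typing import Sequence


def trust_weighted_estimate(
    values: Sequence[float],
    trust: Sequence[float],
    min_trust: float = 0.0,
) -> float:
    """Fuse reported values, weighting each by its trust score in [0, 1].

    Illustrative assumption: reports with trust at or below `min_trust`
    are ignored entirely; the rest contribute in proportion to trust.
    """
    if len(values) != len(trust):
        raise ValueError("values and trust must have the same length")
    if any(not 0.0 <= t <= 1.0 for t in trust):
        raise ValueError("trust scores must lie in [0, 1]")

    # Keep only reports whose trust exceeds the cutoff.
    kept = [(v, t) for v, t in zip(values, trust) if t > min_trust]
    total = sum(t for _, t in kept)
    if total == 0:
        raise ValueError("no sufficiently trusted inputs to fuse")

    # Weighted average: low-trust reports barely move the result.
    return sum(v * t for v, t in kept) / total


# Two trusted neighbors report about 10; a fully distrusted one reports 50.
fused = trust_weighted_estimate([10.0, 10.0, 50.0], [1.0, 1.0, 0.0])
```

In this sketch a report with a trust score of zero has no effect on the fused value, while a partially trusted report is discounted rather than discarded.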

The study also highlighted the role of onboard sensing such as cameras, lidar, radar and GPS as a mechanism for cross-validating external data. By combining local sensing with signal processing of wireless communications, systems can verify whether multiple data sources are genuinely independent or originate from a single malicious actor.

Experimental validation in lab environments demonstrated how the approach can improve system resilience. In tests involving coordinated robot groups, systems using cy-trust were able to detect and isolate malicious agents attempting to disrupt operations through spoofed identities or falsified signals. Over time, the system learned to ignore compromised inputs while maintaining coordinated behavior among trusted agents, the researchers said.
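One way a system could learn to discount a compromised agent over time is sketched below. This is a hedged illustration, not the method used in the study: it assumes each agent compares a neighbor's report with its own local measurement and nudges that neighbor's trust score toward 1 on agreement and toward 0 on disagreement. The function `update_trust`, the `tolerance` threshold and the `rate` parameter are all hypothetical.

```python
def update_trust(
    trust: float,
    reported: float,
    local: float,
    tolerance: float = 1.0,
    rate: float = 0.2,
) -> float:
    """Return an updated trust score in [0, 1] for one neighbor.

    Illustrative assumption: agreement is binary, judged by whether the
    neighbor's report lies within `tolerance` of the local measurement.
    The score is an exponential moving average of that agreement signal.
    """
    agreement = 1.0 if abs(reported - local) <= tolerance else 0.0
    return (1.0 - rate) * trust + rate * agreement


# Start both neighbors at a neutral score and run 20 rounds.
honest, spoofer = 0.5, 0.5
for _ in range(20):
    local_reading = 10.0
    honest = update_trust(honest, reported=10.3, local=local_reading)
    spoofer = update_trust(spoofer, reported=42.0, local=local_reading)
```

After repeated rounds in this toy setup, the honest neighbor's score approaches 1 and the spoofing neighbor's score approaches 0, so a trust-weighted decision rule would effectively ignore the latter.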

Beyond technical implementation, the researchers emphasize that trust frameworks must also be incorporated into policy and regulatory structures. As multi-agent AI systems expand into public environments, from autonomous ride-hailing fleets to logistics and industrial automation, ensuring reliability and safety will be critical for adoption.

The study concluded that embedding trust directly into system design will be essential as AI systems transition from controlled environments to real-world deployment. Without such mechanisms, the increasing scale and autonomy of connected systems could amplify vulnerabilities, limiting their effectiveness and public acceptance, researchers said.

The paper’s co-authors are Michal Yemini of Bar-Ilan University in Israel; Arsenia Chorti of ENSEA in France; Angelia Nedic of Arizona State University; Vincent Poor of Princeton University; and Andrea J. Goldsmith of Stony Brook University.

“As we move into a world where so many of our physical systems consist of multiple agents controlled by AI in the cloud, we require a rigorous framework for their design that is secure and robust against malicious agents,” noted Goldsmith.

Image credit: REACT Lab / Harvard SEAS