Insider Brief

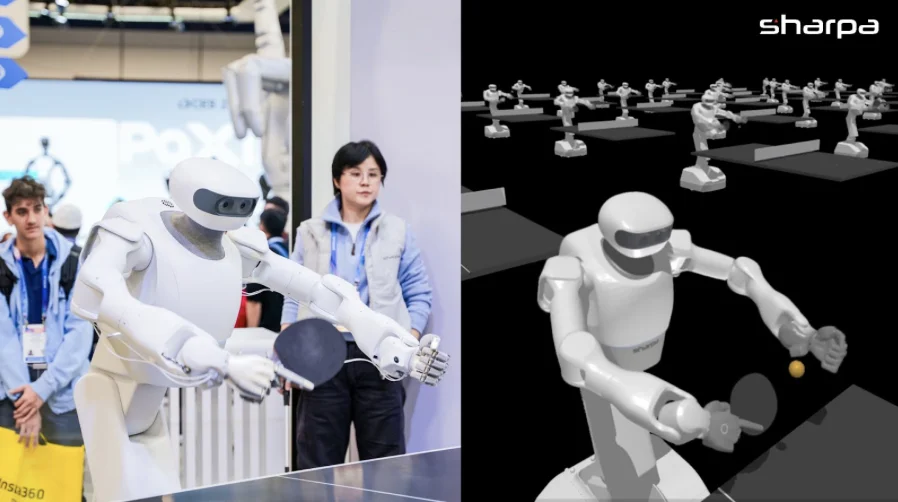

- Sharpa is presenting new research with Nvidia showing improved simulation methods for training robots to perform complex manipulation tasks.

- The collaboration produced Tacmap, a high-fidelity tactile simulation framework designed to balance physical realism with computational speed for robotics training.

- Nvidia researchers also demonstrated that robots equipped with Sharpa’s Wave robotic hands achieved a 54% higher task success rate when using policies trained on more than 20,000 hours of human video data.

PRESS RELEASE — Sharpa presents new research demonstrating significant improvements in simulation methods for robot training, in collaboration with Nvidia.

Sharpa aims to accelerate the deployment of robots capable of complex manipulation tasks across consumer and enterprise markets. To achieve human-like dexterity, Sharpa leverages simulation in two ways: by training the robot on single tasks using Reinforcement Learning (RL) in virtual environments, or by generating synthetic data used to pre-train its Vision-Tactile-Language-Action (VTLA) model. Standard tactile simulations typically force a choice between physical authenticity and computational speed. Tacmap, a simulation framework developed in cooperation with Nvidia, resolves this trade-off through a shared, high-fidelity geometric representation. The simulation and code assets will be open-sourced to share the learnings with the broader robotics community.

“This collaboration strengthens the foundation for training in simulation, advancing the robotics field towards more dexterity and autonomy and accelerating large-scale deployment,” said Alicia Veneziani, Global VP of Go-To-Market and President of Europe at Sharpa.

In addition, Sharpa is proud that its dexterous hand, Wave, has been chosen by Nvidia to advance research on data-efficient learning. Nvidia’s GEAR Lab researchers successfully transferred policies, obtained by pre-training the GR00T model on more than 20,000 hours of human video, to robots equipped with Sharpa’s Wave hands. The robots completed tasks such as assembling model cars, operating syringes, and sorting cards with a 54% higher success rate, demonstrating that video-based training can be scaled effectively to robots with highly anthropomorphic hands.

Sharpa is excited to announce its membership in the Nvidia Inception program. A recipient of the iF Product Design Award and the CES Innovation 2026 Award, Sharpa will present its dexterity-first, full-stack solutions at GTC 2026, demonstrating how human-like hands coupled with a tactile VTLA model can accelerate the deployment of productive robots:

- North, a general-purpose humanoid robot integrating whole-body control and fine loco-manipulation

- Wave, a human-scale robotic hand engineered with 22 active degrees of freedom and tactile sensors

- CraftNet, a VTLA model including a Motion Brain (System 1) that directs movements and an Interaction Brain (System 0) that governs contact with objects, enabling interaction with millimeter-level precision. CraftNet optimises training data by mapping tactile signals onto human video and glove-acquired data.

Image credit: Sharpa